In this post I will show you how to bypass Windows Hello based authentication in some Windows desktop apps.

If you already know Windows Hello, you may want to skip to the description of the flaw.


Introduction to Windows Hello

Windows Hello is a recent addition to the Windows OS that enables biometric authentication. It was previously (in the Windows 7 / 2008 R2 period) called Windows Biometric Framework.

Windows Hello is now an umbrella term for everything-biometry. It brings together the old “Windows Hello” and “Microsoft Passport”.

When Windows 10 first shipped, it included Microsoft Passport and Windows Hello, which worked together to provide multi-factor authentication. To simplify deployment and improve supportability, Microsoft has combined these technologies into a single solution under the Windows Hello name. (source)

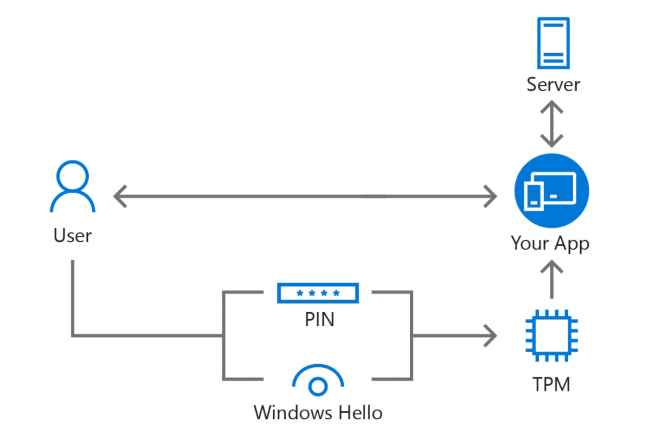

Biometry in Windows Hello does not consist of adding a second, biometric factor to an ordinary password. It completely replaces the password (#passwordless!) and it is still considered Two-Factor Authentication (one factor for the biometry, one for the device through its TPM).

There is also Windows Hello for Business which has some differences (including a dedicated Microsoft Passport Key Storage Provider) but we will not go into details.

For years we have been conditioned to see biometry as a stronger authentication method. Therefore, we naturally tend to put more trust in systems that leverage it. I have found that in some implementations based on Windows Hello it is unfortunately only smoke and mirrors. It looks shiny and futuristic, but it is less secure than less-discussed, password-derived alternatives such as DPAPI.

Please note that the focus here is on Windows desktop applications using biometry, through Windows Hello, to authenticate their users. This is not related to using Windows Hello to open a Windows session.

Windows Hello as an authentication method for remote apps

By “remote apps”, I mean desktop applications that are in fact clients to remote services, i.e. APIs / web services. The remote server holds the data to protect and has its own users database. It is in charge of verifying the users’ authentication and delivering access accordingly.

In this scenario, Windows Hello can be used to authenticate to the remote service with biometry instead of a password. It works very similarly to WebAuthn / FIDO2; in fact, Windows Hello can be used as a WebAuthn authenticator. (If you can read French, I have written an article about it in the MISC magazine, see: “MISC : « WebAuthn » : enfin la fin des mots de passe ?”.)

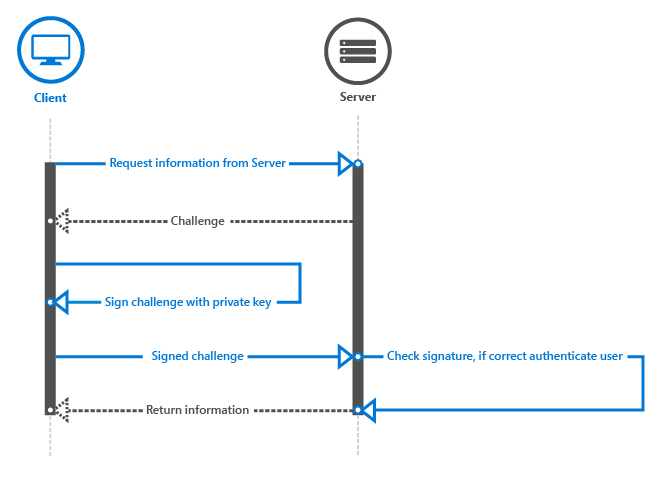

Windows Hello authentication for remote apps works with a challenge/response mechanism, based on public key cryptography.

Account creation flow

- A public-private key pair is generated on the device

- The private key is stored securely locally in the TPM

- The public key is sent to the remote service and stored as the user’s authenticator for this device

Authentication flow

- Users open the desktop app, type their login and choose to use Windows Hello instead of a password

- The server generates a random challenge and sends it to the client

- The client asks the TPM to unlock the private key, through a biometric verification or PIN code, and to use it internally to sign the challenge

- The server receives the response and verifies the signature against the stored public key
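The two flows above can be sketched in a few lines. This is only an illustration under stated assumptions: a software RSA key stands in for the TPM-backed key (with Windows Hello the private key never leaves the TPM and signing requires the biometric or PIN step), both "client" and "server" run in the same program, and the variable names are mine. It assumes .NET 6 or later.

```csharp
using System;
using System.Security.Cryptography;

// Account creation (client): generate a key pair.
// Assumption: a software key replaces the TPM-backed Windows Hello key.
using RSA deviceKey = RSA.Create(2048);

// Account creation (server): store the public key for this user and device.
byte[] registeredPublicKey = deviceKey.ExportSubjectPublicKeyInfo();

// Authentication (server): issue a fresh random challenge.
byte[] challenge = RandomNumberGenerator.GetBytes(32);

// Authentication (client): sign the challenge with the private key.
byte[] signature = deviceKey.SignData(
    challenge, HashAlgorithmName.SHA256, RSASignaturePadding.Pkcs1);

// Authentication (server): verify the signature with the stored public key.
using RSA serverView = RSA.Create();
serverView.ImportSubjectPublicKeyInfo(registeredPublicKey, out _);
bool accepted = serverView.VerifyData(
    challenge, signature, HashAlgorithmName.SHA256, RSASignaturePadding.Pkcs1);

// A signature made for one challenge does not validate another one,
// which is why the server must use a fresh challenge each time.
byte[] otherChallenge = RandomNumberGenerator.GetBytes(32);
bool replayAccepted = serverView.VerifyData(
    otherChallenge, signature, HashAlgorithmName.SHA256, RSASignaturePadding.Pkcs1);

Console.WriteLine($"accepted={accepted}, replayAccepted={replayAccepted}");
```

The important property is that the server never sees a secret: it only holds the public key, and the decision is made on its side.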

Here is how Microsoft explains it:

Microsoft Passport takes the PIN or biometric information from Windows Hello (if available), and uses this information to have the TPM-chip generate a set of public-private keys.

The private key remains secured by the TPM; it cannot be accessed directly, but can be used for authentication purposes through the Microsoft Passport API. The public key is used with authentication requests to validate users and to verify the origin of the message. (source)

APIs

Windows offers the KeyCredentialManager class and the KeyCredential class, which together provide methods for all of these steps:

- Check if the user is set up for Windows Hello: KeyCredentialManager.IsSupportedAsync

- Create a key pair: KeyCredentialManager.RequestCreateAsync

- Get the public key: KeyCredential.RetrievePublicKey

- Sign the challenge: KeyCredential.RequestSignAsync

The Windows.Security.Credentials.KeyCredentialManager class is implemented in “%windir%\System32\KeyCredMgr.dll”, whose description is “Microsoft Key Credential Manager”.
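As a rough sketch of how these calls fit together on the client side: the credential name "demo-account" and the class and method names of the wrapper are hypothetical, error handling is reduced to returning null, and KeyCredentialManager.OpenAsync is used to get back to a previously created key. The code needs a Windows target with access to the WinRT APIs and cannot run without a user enrolled in Windows Hello.

```csharp
using System;
using System.Threading.Tasks;
using Windows.Security.Credentials;
using Windows.Security.Cryptography;
using Windows.Storage.Streams;

public static class HelloKeySketch
{
    // Hypothetical credential name chosen for this sketch.
    private const string CredentialName = "demo-account";

    // Account creation: returns the public key to register with the server, or null.
    public static async Task<IBuffer> CreateKeyAsync()
    {
        if (!await KeyCredentialManager.IsSupportedAsync())
            return null; // Windows Hello is not set up for this user

        KeyCredentialRetrievalResult created = await KeyCredentialManager.RequestCreateAsync(
            CredentialName, KeyCredentialCreationOption.ReplaceExisting);
        if (created.Status != KeyCredentialStatus.Success)
            return null;

        return created.Credential.RetrievePublicKey();
    }

    // Authentication: signs the server challenge. Windows shows the
    // biometric / PIN prompt as part of RequestSignAsync.
    public static async Task<IBuffer> SignChallengeAsync(byte[] challenge)
    {
        KeyCredentialRetrievalResult opened = await KeyCredentialManager.OpenAsync(CredentialName);
        if (opened.Status != KeyCredentialStatus.Success)
            return null;

        IBuffer data = CryptographicBuffer.CreateFromByteArray(challenge);
        KeyCredentialOperationResult signed = await opened.Credential.RequestSignAsync(data);
        return signed.Status == KeyCredentialStatus.Success ? signed.Result : null;
    }
}
```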

If you want to know more, refer to:

- The detailed technical whitepaper: https://docs.microsoft.com/en-us/windows/uwp/security/microsoft-passport#34-authentication-at-the-backend

- A code sample: https://developer.microsoft.com/en-us/windows/campaigns/windows-dev-essentials-adding-biometric-authentication-with-windows-hello

The Microsoft articles are heavily oriented towards using Windows Hello in UWP (Universal Windows Platform) applications, but the APIs work with classic desktop apps too since they have the DualApiPartitionAttribute.

Windows Hello as an authentication method for local apps

By “local apps”, I mean standalone / client-only / offline applications. They cannot reach a server and they usually store the data to protect themselves. We know that security in this context is hard, since we must assume that a malicious user, or an intruder, has full control over the device. They can tamper with all security mechanisms, especially when these are implemented, as we call it, “client-side” (even if there is no “server-side” here).

DPAPI (Data Protection API, see also my article on its usage in Chrome) has some flaws, and interesting research has been published about it. In summary, it protects data with cryptographic keys derived from the user’s password. If an attacker finds a computer with an unencrypted drive, or manages to bypass the authentication (e.g. through a remote code execution vulnerability such as MS17-010/EternalBlue), they will not be able to decrypt the protected data as long as they do not know or guess the session owner’s password.

Windows Hello should offer at least the same guarantee. We must be able to assume that the data is protected with secrets derived from the owner’s biometric features and that the biometric step cannot be skipped.

Bypass flaw when using UserConsentVerifier

I have discovered that many apps use a misleading Windows Hello API that does not offer this security property. The data protection is not really bound to Windows Hello; it is only a client-side check that can be skipped and bypassed, given access to the device (local/physical attacker).

Let’s take another look at the “3.3 Force the user to sign in again” section of the Microsoft detailed technical whitepaper:

For some scenarios, you may want the user to prove he or she is the person who is currently signed in, before accessing the app or sometimes before performing a certain action inside of your app. For example, before a banking app sends the transfer money command to the server, you want to make sure it is the user, rather than someone who found a logged-in device, attempting to perform a transaction. You can force the user to sign in again in your app by using the UserConsentVerifier class.

[…]

Of course, you can also use the challenge response mechanism from the server, which requires a user to enter his or her PIN code or biometric credentials.

The last point would be nice, but we agreed that this cannot be done in the context of local apps.

The Windows.Security.Credentials.UI Namespace has the UserConsentVerifier Class.

On your computer, you can find it implemented in “%windir%\System32\Windows.Security.Credentials.UI.UserConsentVerifier.dll”, whose description is “Windows User Consent Verifier API”.

The interesting method here is UserConsentVerifier.RequestVerificationAsync.

Performs a verification using a device such as Microsoft Passport PIN, Windows Hello, or a fingerprint reader.

You can use the RequestVerificationAsync method to request user consent for authentication. For example, you can require fingerprint authentication before authorizing an in-app purchase, or access to restricted resources.

This method returns a UserConsentVerificationResult enumeration which tells if the biometry verification is confirmed, or if an error occurred.

Note that I did not discover a vulnerability in UserConsentVerifier itself, but instead a flaw in how it is used by many applications.
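To make the problematic usage concrete, here is a minimal sketch of the pattern. It is not taken from any of the applications discussed below: the class name, the prompt text and the LoadSecret helper are hypothetical, and the helper stands for any storage that can be read without Windows Hello.

```csharp
using System;
using System.IO;
using System.Threading.Tasks;
using Windows.Security.Credentials.UI;

public static class ConsentGateSketch
{
    public static async Task<byte[]> UnlockAsync(string secretPath)
    {
        UserConsentVerificationResult result =
            await UserConsentVerifier.RequestVerificationAsync("Please confirm your identity");

        // The only link between the biometric check and the secret is this branch.
        if (result != UserConsentVerificationResult.Verified)
            return null;

        // Nothing produced by the verification is needed to read the secret:
        // patching out the branch above, or reading the storage directly,
        // yields exactly the same bytes.
        return LoadSecret(secretPath);
    }

    // Hypothetical helper: any storage that does not depend on Windows Hello.
    private static byte[] LoadSecret(string path) => File.ReadAllBytes(path);
}
```

The verification result is just an enumeration value consumed by an if statement, so it carries no key material that the rest of the code depends on.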

I have found two examples of vulnerable applications on GitHub. I will not name them directly, even if I give links to the repositories, since they are only examples among others and I do not want to point fingers.

Example #1

This application is a client for a cloud service. The app allows users to log in with Windows Hello. To do that, the user’s login and password for the service are stored. Let’s see how.

When the app launches, in the OnLaunched method of App.xaml.cs, there is a call to WindowsHello.VerifyAsync

    if (Credentials.Exists() && (!Settings.Local.UseWindowsHello || await WindowsHello.VerifyAsync()))

WindowsHello.VerifyAsync relies on UserConsentVerifier.RequestVerificationAsync

    public static async Task<bool> VerifyAsync()
    {
        var availability = await UserConsentVerifier.CheckAvailabilityAsync();
        if (availability != UserConsentVerifierAvailability.Available)
            await new MessageDialog(Localization.HelloUnavialable, Localization.Warning).ShowAsync();
        else
        {
            while (true)
            {
                var result = await UserConsentVerifier.RequestVerificationAsync(Localization.HelloAuth);

If UserConsentVerifier returns a positive result, the app calls Credentials.AuthenticateAsync

    if (Credentials.Exists() && (!Settings.Local.UseWindowsHello || await WindowsHello.VerifyAsync()))
    {
        Seafile = await Credentials.AuthenticateAsync();

Credentials.AuthenticateAsync relies on encrypted settings, but how are they encrypted?

The EncryptedSettings class relies on the EncryptedSettingsProvider class

    public EncryptedSettings()
    {
        SettingsProvider = new EncryptedSettingsProvider();
    }

It has an internal class for Encrypt and Decrypt operations.

    private static class Crypt
    {
        private const string Salt = nameof(FourSeafile);
        public static byte[] Encrypt(string plainText, string key)
        {
            var pwBuffer = CryptographicBuffer.ConvertStringToBinary(key, BinaryStringEncoding.Utf8);
            var saltBuffer = CryptographicBuffer.ConvertStringToBinary(Salt, BinaryStringEncoding.Utf16LE);
            var plainBuffer = CryptographicBuffer.ConvertStringToBinary(plainText, BinaryStringEncoding.Utf16LE);

It relies on AES_CBC_PKCS7 for encryption and PBKDF2_SHA1 for key derivation which seems fine. But where do the salt and key come from?

The salt is the name of a class.

    private const string Salt = nameof(FourSeafile);

The key is the device ID which comes from EasClientDeviceInformation().Id which is not secret either.

    private string _deviceId = GetDeviceId();
    [...]
    Crypt.Encrypt(value.ToString(), _deviceId)
    [...]
    private static string GetDeviceId()
        => new EasClientDeviceInformation()
            .Id.ToString();

So in summary, attackers could directly decrypt the settings now that they know that they are protected using only readable data. But they could also patch the application, or the Windows Hello API, to skip the verification and continue on to the rest of the code.
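The first option can be illustrated with a short sketch showing that a key derived only from readable inputs can be re-derived by anyone. Several things here are assumptions, not facts about the application: the iteration count, the key size, the way the IV is stored, and the device ID value are made up, and .NET's System.Security.Cryptography classes are used in place of the WinRT ones from the excerpt. It assumes .NET 6 or later.

```csharp
using System;
using System.Security.Cryptography;
using System.Text;

// Assumed parameters for illustration; the application's real values may differ.
const int Iterations = 10_000;
const int KeyBytes = 32;

// Both inputs are readable by anyone with access to the device.
string salt = "FourSeafile";                               // value of nameof(FourSeafile)
string deviceId = "7f2c1e9a-0000-4000-8000-123456789abc"; // hypothetical device ID

// PBKDF2-SHA1 over the device ID (UTF-8) with the class name as salt (UTF-16LE).
byte[] DeriveKey(string password, string saltText) =>
    Rfc2898DeriveBytes.Pbkdf2(
        Encoding.UTF8.GetBytes(password),
        Encoding.Unicode.GetBytes(saltText),
        Iterations, HashAlgorithmName.SHA1, KeyBytes);

// AES-CBC with PKCS7 padding; the IV is prepended to the ciphertext (assumption).
byte[] Encrypt(string plainText, byte[] key)
{
    using Aes aes = Aes.Create();
    aes.Key = key;
    byte[] iv = aes.IV;
    byte[] cipher = aes.EncryptCbc(Encoding.Unicode.GetBytes(plainText), iv);
    byte[] blob = new byte[iv.Length + cipher.Length];
    iv.CopyTo(blob, 0);
    cipher.CopyTo(blob, iv.Length);
    return blob;
}

string Decrypt(byte[] blob, byte[] key)
{
    using Aes aes = Aes.Create();
    aes.Key = key;
    byte[] plain = aes.DecryptCbc(blob[16..], blob[..16]);
    return Encoding.Unicode.GetString(plain);
}

// What the application stores...
byte[] stored = Encrypt("alice:correct horse battery staple", DeriveKey(deviceId, salt));

// ...and what an attacker recovers by deriving the same key from the same
// public inputs, without ever triggering a Windows Hello prompt.
string recovered = Decrypt(stored, DeriveKey(deviceId, salt));
Console.WriteLine(recovered);
```

The strength of AES and PBKDF2 is irrelevant here: the weak point is that no input to the key derivation is secret.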

I privately reported this vulnerability to the author, who fixed it and was really nice to work with 🙂 In a commit, among other things, the salt is now generated by KeyCredential.RequestSignAsync, where biometric verification cannot be skipped.

Example #2

(I will disclose fewer details on this example, as it is not fixed yet)

This application is a credential manager (password vault) that can be unlocked either with a passphrase or with Windows Hello; users choose between the two. If Windows Hello is chosen, the UserConsentVerifier class is used.

If the return from UserConsentVerifier.RequestVerificationAsync is positive, then the passphrase is fetched from the PasswordVault, which is the standard Windows password vault, protected with DPAPI.

So in summary, attackers could directly get the passphrase from the Windows password vault (e.g. with vault::cred in Mimikatz and similar tools) when running in the context of the target user, thus bypassing completely the biometry step.
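For illustration, here is a sketch of how little is needed to read such an entry through the documented PasswordVault API. The resource name "ExampleVaultApp" and the class name are hypothetical. It also assumes the code runs in a context that can see the application's vault entries (for example code injected into, or patched into, the application itself); tools that read the vault storage directly do not have this restriction.

```csharp
using System;
using System.Collections.Generic;
using Windows.Security.Credentials;

public static class VaultReadSketch
{
    // "ExampleVaultApp" is a hypothetical resource name; a real application picks its own.
    public static void DumpPassphrases(string resource = "ExampleVaultApp")
    {
        var vault = new PasswordVault();

        // Throws if no entry exists for this resource.
        IReadOnlyList<PasswordCredential> entries = vault.FindAllByResource(resource);

        foreach (PasswordCredential entry in entries)
        {
            // Populates entry.Password; no biometric or PIN prompt is involved.
            entry.RetrievePassword();
            Console.WriteLine($"{entry.UserName}: {entry.Password}");
        }
    }
}
```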

This type of weak solution, mixing the PasswordVault and UserConsentVerifier, is proposed on Stack Overflow, even though the question’s author rightly rejected it for not being secure enough.

The project owner was very quick to answer and considered my notice 🙂. But a few months ago they decided not to fix this (for example by using a machine-unique key), because current users could lose access to their current passwords and the synchronization across devices feature would no longer work.

What is the solution then?

Now that the problem is clear, we need to propose a solution! Is there a way to use Windows Hello to protect credentials and data that does not rely on the weak UserConsentVerifier?

I only found one interesting post, on StackOverflow: “store password with Windows Hello”

Both is not very useful for my use-case: I want a symmetric way to store and retrieve the password.

My question in short:

Is there a way to symmetrically store data with Windows Hello?

The proposed solution, from the question’s author, is interesting. The idea is:

encrypting / decrypting the secret I wanted to store using a password generated with Windows Hello. The password was a signature over a fixed message

This is a nice trick! It uses the signature mechanism locally to obtain an encryption key correctly tied to the biometry and the TPM. The author used this solution in one project; see how he implemented it in his LoginService.
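A minimal sketch of the idea follows. It is not the Stack Overflow author's implementation: a software RSA key stands in for the Windows Hello key (where the signature would come from KeyCredential.RequestSignAsync and require the biometric or PIN step), the fixed message label is hypothetical, and hashing the signature with SHA-256 to get an AES key is my own choice. Note that the trick only works if signing the same message always yields the same bytes, which holds for RSA PKCS#1 v1.5 signatures but not for randomized schemes such as RSA-PSS or ECDSA. It assumes .NET 6 or later.

```csharp
using System;
using System.Security.Cryptography;
using System.Text;

// Assumption: a software key replaces the TPM-backed Windows Hello key.
using RSA helloKey = RSA.Create(2048);

// Fixed message: signing it again later must reproduce the same key material.
byte[] fixedMessage = Encoding.UTF8.GetBytes("example-app/vault-key/v1"); // hypothetical label

byte[] DeriveKey(RSA key)
{
    // PKCS#1 v1.5 signatures are deterministic for a given key and message.
    byte[] signature = key.SignData(
        fixedMessage, HashAlgorithmName.SHA256, RSASignaturePadding.Pkcs1);
    return SHA256.HashData(signature); // 32 bytes, usable as an AES-256 key
}

// Enrollment: derive the key and encrypt the secret.
byte[] keyAtEnrollment = DeriveKey(helloKey);
using Aes encryptor = Aes.Create();
encryptor.Key = keyAtEnrollment;
byte[] iv = encryptor.IV;
byte[] cipher = encryptor.EncryptCbc(Encoding.UTF8.GetBytes("vault passphrase"), iv);

// Unlock: sign the same message again to get the same key, then decrypt.
byte[] keyAtUnlock = DeriveKey(helloKey);
bool sameKey = CryptographicOperations.FixedTimeEquals(keyAtEnrollment, keyAtUnlock);
using Aes decryptor = Aes.Create();
decryptor.Key = keyAtUnlock;
string recovered = Encoding.UTF8.GetString(decryptor.DecryptCbc(cipher, iv));

Console.WriteLine($"sameKey={sameKey}, recovered={recovered}");
```

Unlike the UserConsentVerifier pattern, skipping the signing step here leaves the attacker without the key, so there is nothing useful to patch out. An authenticated mode such as AES-GCM would be a better choice than plain CBC in real code.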

If you are a cryptography expert and know that this violates some best practices, or if you have simply found a way to crack this scheme, please speak up!

Note about the considered threat model

Some people will argue that as long as someone has control of your computer, it is not your computer any more, and the game is already over. It relates to some of the 10 Immutable Laws Of Security proposed by Microsoft (version 2.0):

1. If a bad guy can persuade you to run his program on your computer, it’s not solely your computer anymore.

2. If a bad guy can alter the operating system on your computer, it’s not your computer anymore.

3. If a bad guy has unrestricted physical access to your computer, it’s not your computer anymore.

I agree with that and I have no intention to fight this. However, I think that once a computer is in the hands of a bad guy, they should not be able to access the data that is normally protected. This is why we have disk encryption, DPAPI, master passwords on password vaults, etc.

These additional protections do not withstand backdooring, e.g. when the attacker first modifies the programs or the OS, then lets you use the device normally, and finally keylogs your master password as you type it. But data protected with Windows Hello must be able to withstand a cold attack against an unencrypted disk extracted from the device, or an attack from an active attacker within an unlocked Windows session but with a locked password manager, for example. Unfortunately, this is not the case in the previous examples.

This resonates well with another of these 10 Immutable Laws Of Security:

7. Encrypted data is only as secure as its decryption key.

This attack works against unencrypted computers, and in this case, the security level is lower than when using DPAPI. At least DPAPI derives its secrets from the user’s password, so it cannot be bypassed as long as the user is not currently logged in and does not have a weak password.

Key takeaways for pentesters and security engineers

If you are testing an application that advertises support for Windows Hello as an authentication method, it should attract your interest after reading this!

Use whatever means you have (source-code review, API monitoring, reverse engineering…) to see how Windows Hello is actually called.

💡 If you see a reference to UserConsentVerifier, and more specifically UserConsentVerifier.RequestVerificationAsync, this is definitely a red flag! It usually means that there is a simple client-side check that can be circumvented, and there is actually a way, a few lines below, to get the protected information anyway.

This is more likely to be the case with standalone / client-only / offline applications since they cannot perform a handshake authentication (challenge/response) with a server that would protect the information.

Key takeaways for developers

I hope to have convinced you that you must not use UserConsentVerifier.RequestVerificationAsync to protect secrets. If the code chains a first function that checks the biometry and a second function that somehow decrypts the secret data, and if the second function still works when an attacker skips the first one, then it is not secure.

What you need is a way to bind the key protecting the data to the user’s biometry. As explained above, one way would be to use the signature mechanism of Windows Hello to sign a static challenge, and to use the resulting signature as a symmetric encryption key to protect the data.

Call to Microsoft

I wish Microsoft had better explained how this promising new API can be used securely and, in particular, where it is not yet a good fit. I tried to report it to MSRC, but obviously it is not a security vulnerability and they discarded it. Unfortunately I do not know where to suggest it instead…

Also, I think that Microsoft should give developers a proper and official API to protect data and encryption keys locally on the device, because right now there is a need, and we see an ecosystem of tutorials, code samples, and libraries that allow developers to build applications that look secure but are actually built on sand. Tell me if I missed something! 😉

Further research in a future article

In a follow-up article, I will dive into how UserConsentVerifier works internally, and more specifically how it leverages Windows Hello and other existing Windows crypto and credential APIs to do its magic!

I still need to do some research and gather my notes. Stay tuned and if you have information please contact me.

In the meantime, I have compared Windows Hello with other solutions such as Touch ID and Face ID of the Apple ecosystem, and similar tools in Android. Head over to Security pitfalls in authenticating users and protecting secrets with biometry on mobile devices (Apple & Android)